Design Considerations for Laser-Cut Server Chassis Parts

I’ve seen plenty of server chassis projects that looked perfect on screen and fell apart on the shop floor. The pattern is boring. Bad tolerance logic. Lazy vent geometry. Steel chosen out of habit. Coating ignored until the end. This article looks at the subject through the essentials that drive yield, fit, thermal behavior, and cost.

Tiny mistakes compound.

I’ve watched teams obsess over laser specs, quote turnaround, and sheet price while ignoring the boring geometry that actually decides whether a server chassis goes together cleanly, cools properly, and survives pilot build without three operators standing around with files, shims, and that grim look people get when a “simple enclosure” starts eating margin. Then everyone acts surprised.

Why?

Because here’s the ugly truth: a lot of server sheet metal is still designed like office equipment from ten years ago, even though the market moved on fast, rack power is up, AI server demand has bent lead times and expectations, and the penalty for a sloppy hole pattern or a lazy bend callout now shows up much earlier in the program. According to Reuters, Foxconn said in May 2024 that strong AI server demand was driving revenue expectations, and Supermicro’s January 2024 forecast came with reported 71% sequential growth tied to AI server momentum. That doesn’t describe a forgiving environment. It describes a pressure cooker.

And yes, the thermal side is now inseparable from the mechanical side. Uptime Institute’s 2024 survey says rack densities are rising but still average below 8 kW, and that nearly a third of operators report rapid growth in rack power for recent deployments. So if someone still treats the chassis as “just the box,” I’d frankly worry about the rest of the program too.

Server sheet metal stops being “simple” the minute you scale it

Three things matter.

Repeatability. Thermals. Assembly forgiveness.

That’s it—and also not it, because each of those buckets hides ten failure modes. I’ve seen flat patterns look beautiful in review meetings and then turn nasty on the line because nobody really asked the obvious questions: what moves, what mates, what floats, what gets coated, what gets torqued, what gets reworked when the board revision arrives two weeks late and suddenly the rear I/O opening is off by just enough to ruin your afternoon?

But the industry has already shown where this goes. Open Compute’s 2024 DC-MHS work published a common chassis design to handle differing host processor module thicknesses and connector offsets, including explicit discussion of chassis gaps, rail guides, spacer use, and different rear I/O wall openings. Read that again. The people doing serious open hardware work are literally designing around variation as a first-order issue. They are not pretending one neat DXF solves everything. (opencompute.org)

That matters.

Because a server enclosure is not a decorative shell. It’s a mechanical system with knock-on effects everywhere: airflow impedance, EMI behavior, grounding continuity, cable bend radius, serviceability, fan wall stiffness, rail engagement, latch force, coating buildup, and field replacement logic. People forget that. Or worse, they know it and still rush it.

If the board can still shift, the chassis probably isn’t ready

I know that sounds blunt, but I’m not going to soften it. From my experience, one of the fastest ways to destroy a good NPI schedule is to lock chassis geometry before the board outline, connector stack, and service clearances are really frozen. The CAD may be “released.” The design is not.

Open Compute’s April 2024 chassis work didn’t stay vague either. It referenced 1U chassis assumptions around 42.8 mm overall height and spacer strategies to absorb variation across mounting situations. That’s what real mechanical thinking looks like. Not vibe. Not hope. Controlled accommodation.

And once you’re thinking like that, server chassis traceability marking workflows stop looking optional. They become part of the build logic: serial control, revision tracking, service ID marks, internal bracket identification, all the unglamorous stuff that saves you when five similar parts start circulating in assembly totes.

Tolerances are where most chassis programs quietly bleed money

Most drawings lie.

Not because the CAD is wrong. Because the tolerance intent is fuzzy, overgeneralized, or just copied from the last project. I’ve seen global notes slapped onto prints like bandages—nice and clean until the formed parts arrive and suddenly the rear ports don’t line up, the tray sits stressed, the PEM location drifts into trouble, and someone says the vendor had “bad consistency.” Maybe. But maybe the print was lazy.

NIST’s guidance on sheet metal still says something people should have tattooed on the wall: sheet metal manufacturing tolerance is bilateral, and specifying only gauge without equivalent decimal thickness is bad practice because stock sizes vary and unilateral assumptions often depart from standard practice. That sounds basic. It still gets ignored all the time.

So here’s my bias. I don’t trust a server chassis drawing that only says “18 ga” and leaves the rest to interpretation. Call out thickness in millimeters. Separate cosmetic dimensions from functional ones. Make rear I/O features, rail datums, board mount patterns, and latch interfaces earn their tolerances. Don’t dump the same blanket number across everything because it makes the title block look tidy.

That shortcut gets expensive.
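To make “earn their tolerances” concrete, here is a minimal Python sketch of a stack-up for one functional chain, from the rear I/O opening to the connector it has to clear. The contributor names and the ± values are placeholders I made up for illustration; they are not from any real drawing or supplier. The sketch compares a worst-case sum against a root-sum-square (RSS) estimate, which assumes the contributors vary independently.

```python
import math

def stack_up(contributors):
    """Return (worst_case, rss) for a dict of symmetric +/- tolerances in mm."""
    worst_case = sum(abs(t) for t in contributors.values())
    # RSS assumes independent contributors; treat it as an estimate, not a guarantee.
    rss = math.sqrt(sum(t * t for t in contributors.values()))
    return worst_case, rss

# Hypothetical chain; every value below is an illustrative assumption.
rear_io_chain = {
    "laser_cut_opening_position": 0.10,
    "bend_flange_location": 0.25,
    "standoff_insert_position": 0.15,
    "board_connector_placement": 0.20,
}

worst, rss = stack_up(rear_io_chain)
print(f"worst case: +/-{worst:.2f} mm, RSS: +/-{rss:.2f} mm")
```

With these made-up numbers the worst case is ±0.70 mm and the RSS estimate is about ±0.37 mm. The formed flange is the largest single contributor, which is exactly why a blanket tolerance note hides the feature that needs attention.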

Flat blanks don’t ship. Formed parts do.

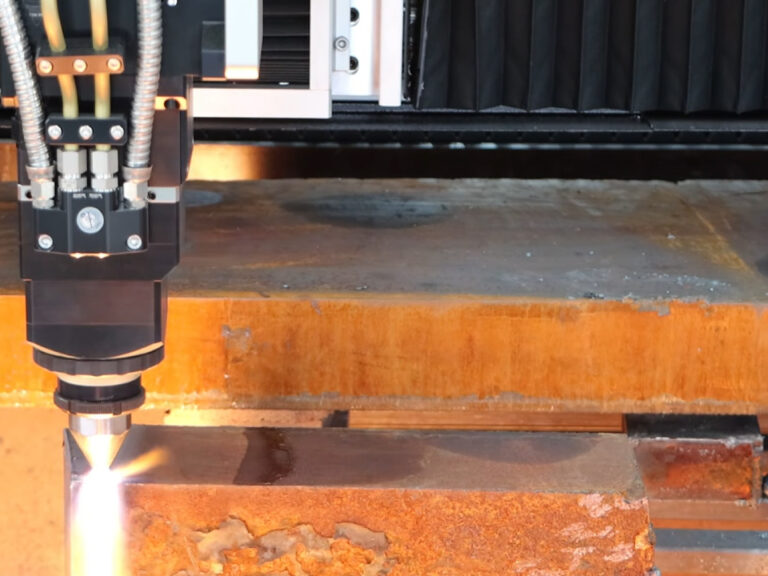

This is where people get burned. They inspect the laser-cut blank, feel smug about the profile accuracy, and then forget that the product the customer sees—and the assembly team fights with—is the bent, inserted, coated, stacked-up version. Hole-to-bend relationships. Springback. Flange drift. Relief geometry. Finish buildup. That’s the real part.

And if your motherboard datum depends on a flange that nobody is checking after forming, you’re basically measuring a ghost.
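A quick way to catch hole-to-bend trouble before the part is formed is to screen the flat pattern against a minimum edge-to-bend distance. The sketch below assumes a commonly quoted shop rule of thumb (a multiple of thickness plus inside bend radius); the 2.5 factor, the hole names, and the dimensions are my assumptions, and your supplier’s real capability should replace them.

```python
def min_hole_edge_to_bend(thickness_mm, inside_radius_mm, factor=2.5):
    """Assumed rule of thumb: factor * thickness + inside bend radius."""
    return factor * thickness_mm + inside_radius_mm

def holes_too_close(holes, thickness_mm, inside_radius_mm, factor=2.5):
    """holes maps name -> (center_to_bend_mm, diameter_mm); returns flagged names."""
    limit = min_hole_edge_to_bend(thickness_mm, inside_radius_mm, factor)
    flagged = []
    for name, (center_to_bend, diameter) in holes.items():
        edge_to_bend = center_to_bend - diameter / 2
        if edge_to_bend < limit:
            flagged.append(name)
    return flagged

# Hypothetical features on a 1.2 mm part with a 1.2 mm inside radius.
holes = {
    "pem_stud_a": (8.0, 4.0),   # hole edge sits 6.0 mm from the bend line
    "vent_row_1": (5.0, 3.0),   # hole edge sits 3.5 mm from the bend line
}

print(holes_too_close(holes, thickness_mm=1.2, inside_radius_mm=1.2))
```

Here the assumed limit works out to 4.2 mm, so only `vent_row_1` is flagged. A screen like this does not replace inspecting the formed part; it only tells you where distortion is likely.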

| Design area | Typical shortcut | What it causes in production | Better move |
|---|---|---|---|
| Material thickness | Specify gauge only | Unexpected fit drift across vendors | Call out decimal thickness and allowable range |
| Rear I/O cutout | Tolerance as cosmetic opening | Port misalignment, screw stress, EMI headaches | Tie opening to board and connector datums |
| Fan wall / vent field | Maximize open area blindly | Noise, weak panels, turbulent flow | Balance stiffness, airflow path, and serviceability |
| Hole near bend | Use flat pattern spacing only | Hole distortion or tool conflict | Define bend relief and hole-to-bend minimums |
| Coated surfaces | Ignore finish buildup | Tight latches, poor grounding, rub marks | Include coating stack in mating logic |
| Mixed SKUs | Unique chassis for each board | Inventory bloat, NPI delays | Use modular common chassis strategy where possible |
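The “coated surfaces” row is easy to put numbers on. Below is a minimal sketch of how finish buildup eats the clearance of a tab in a slot, assuming both walls of the slot and both faces of the tab are coated (four coated faces across the gap). The film thickness and part dimensions are illustrative assumptions, not values from any finish spec.

```python
def coated_gap(slot_width_mm, tab_thickness_mm, film_mm, coated_faces=4):
    """Total remaining gap after coating; a negative result means interference."""
    return slot_width_mm - tab_thickness_mm - coated_faces * film_mm

# Hypothetical latch tab: 1.6 mm slot, 1.2 mm tab, 0.08 mm film per face (assumed).
bare = coated_gap(1.6, 1.2, film_mm=0.0)
coated = coated_gap(1.6, 1.2, film_mm=0.08)
print(f"bare gap: {bare:.2f} mm, coated gap: {coated:.2f} mm")
```

With these numbers a 0.40 mm bare gap shrinks to roughly 0.08 mm once the film is on, before any forming or positional variation is counted. That is the kind of result that should drive a masking decision or a wider slot.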

I also think too many teams delay process questions that should come early—especially around surface preparation before coating or grounding. That usually ends the same way: a late-stage argument about finish quality, conductivity, adhesion, or contamination that should have been settled before the RFQ ever went out.

Material choice is usually less glamorous than people want

Steel wins often.

That’s not sexy. It’s just true. Cold-rolled steel keeps winning in mainstream server enclosure work because it gives you a workable mix of stiffness, forming consistency, cost control, grounding behavior, and finish compatibility. Aluminum has a place—sure—but it changes more than weight. It changes stiffness assumptions, fastening decisions, thread strategy, dent behavior, and how forgiving the build is when technicians get a little aggressive.

Stainless? Sometimes. But I honestly think it gets overused by teams trying to look “premium” before they’ve solved the actual enclosure problems. Harsh, maybe. Still my view.

And the vent pattern debate—let’s talk about that for a second. More holes do not automatically mean better cooling. That’s lazy thinking. Airflow depends on pressure path, fan curve, obstruction, internal packaging, and how the whole chassis breathes under load. Uptime’s 2024 data makes the broader point pretty clear: rack power isn’t standing still, and higher-density deployments are becoming more common. That means your vent geometry is no longer a cosmetic detail buried in the mechanical drawing. It’s part of the thermal strategy whether you like it or not.

My unpopular view on vent fields

Bigger isn’t smarter.

I’ve seen huge decorative perforation zones that looked fantastic in renderings and then created flimsy panels, noisy airflow, weird coating behavior, and extra headaches around EMI and handling. What matters is not max open area on paper. What matters is controlled airflow with enough panel integrity left to survive manufacturing and service.
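The trade between open area and panel integrity can be checked with basic geometry before anyone argues about renderings. This sketch computes the open-area fraction of a round-hole perforation field and the web (ligament) left between adjacent holes. The hole sizes and pitches are illustrative assumptions, and the result says nothing about pressure drop or the fan curve; that still needs airflow analysis or testing.

```python
import math

def open_area_fraction(hole_dia_mm, pitch_mm, pattern="staggered60"):
    """Geometric open-area fraction of a uniform round-hole field."""
    ratio = (hole_dia_mm / pitch_mm) ** 2
    if pattern == "staggered60":
        # 60-degree staggered (hexagonal) layout
        return math.pi / (2 * math.sqrt(3)) * ratio
    if pattern == "square":
        # straight rows and columns
        return math.pi / 4 * ratio
    raise ValueError(f"unknown pattern: {pattern}")

def web_width(hole_dia_mm, pitch_mm):
    """Material left between two adjacent holes along the pitch direction."""
    return pitch_mm - hole_dia_mm

# Two hypothetical vent fields with the same 5 mm hole.
for pitch in (6.5, 8.0):
    frac = open_area_fraction(5.0, pitch)
    print(f"pitch {pitch} mm: open area {frac:.1%}, web {web_width(5.0, pitch):.1f} mm")
```

The tighter pitch gives roughly 54% open area with a 1.5 mm web; the wider pitch gives roughly 35% with a 3.0 mm web. Which one is right depends on the stiffness, EMI, and airflow targets, not on which number is bigger.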

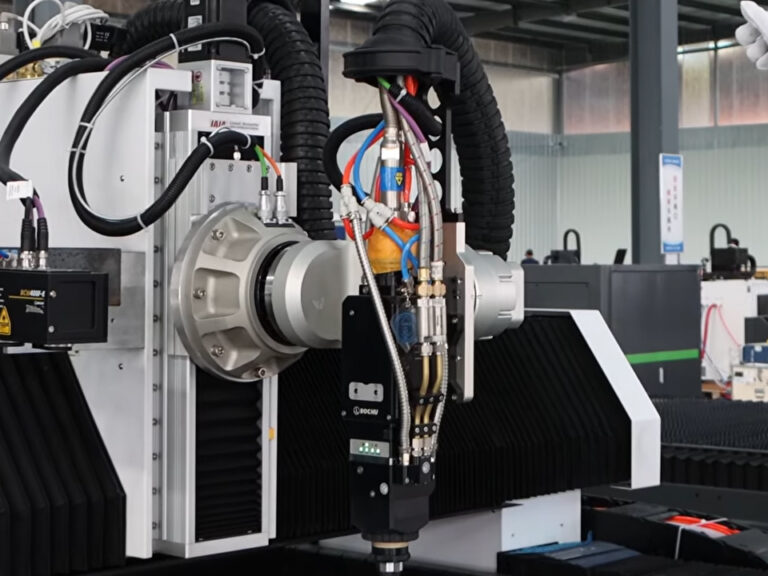

That’s why process-aware shops matter. Even general metal laser engraving and cutting know-how reinforces something useful here: feature quality, edge behavior, marking precision, and what happens when fine details stop being decorative and start affecting function.

AI servers changed the chassis conversation whether people admit it or not

Look at the volume signals.

In January 2024, Reuters reported that Supermicro’s forecast reflected 71% sequential growth, with analysts tying the jump to generative AI server demand. In May 2024, Reuters reported Foxconn remained confident that strong AI server demand would drive revenue that year. And in September 2024, Reuters reported Lenovo planned annual production in India of 50,000 AI rack servers and 2,400 GPU servers. Stack those three together and the message is pretty obvious: server hardware is moving faster, regionalizing faster, and scaling harder.

So what happens to chassis design under that kind of pressure?

It stops being a one-revision problem. Now you need family logic. Shared rails. Adaptable cutouts. Clear marking zones. Controlled tolerance chains. Service access that still works when the product line mutates six months later. If you build every new board around a one-off box, you’re building future pain into the business model.

That’s the part many teams don’t want to hear.

Design it as a family, not as a one-night stand

Yes, I said it.

Common brackets where possible. Rail features that don’t need reinvention every SKU. Connector windows designed for adaptation instead of desperate patch edits. Real cable-bend clearance. Zones for serialization, compliance marks, and service labels. And if you’re dealing with coated or mixed-material subassemblies, disciplined UV marking for small precision features and codes starts to make more sense than people think.

Because once the program scales, the small stuff isn’t small anymore.

The design checklist I trust more than a glossy render

I keep it ugly.

Meaning practical. Meaning I care more about the first pilot build than the prettiest screenshot in the deck.

What I’d want frozen before RFQ

- Final board outline and connector keep-outs

- Real fan and PSU envelope data

- Coating stack assumptions

- Grounding path requirements

- Flatness and cosmetic zones

- Critical datums for rear I/O, rails, and mounting points

- SKU family logic for common vs unique parts

What I’d ask the supplier before I trust the quote

- How are formed parts inspected, not just flats?

- What’s the real hole-to-bend capability?

- What burr and edge standard is being held?

- How is coating buildup handled in fit-critical zones?

- Will the first-article package include mating-part photos?

- What happens after pilot build if assembly finds pain points?

What I would never leave vague

- Decimal thickness

- Functional tolerances

- Datum references

- Finish callouts

- Hardware insertion method

- Marking and traceability zones

If a shop gets fuzzy on those answers, I don’t really care how attractive the unit price looks. Cheap quotes have killed plenty of decent programs.
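The “never leave vague” list is simple enough to turn into a mechanical check before the RFQ goes out. The sketch below is one way to do it, assuming the drawing pack is summarized as a plain dictionary; the field names are hypothetical and should be mapped to however your release data is actually tracked.

```python
# Hypothetical field names mirroring the "never leave vague" list above.
NEVER_VAGUE = [
    "decimal_thickness_mm",
    "functional_tolerances",
    "datum_references",
    "finish_callouts",
    "hardware_insertion_method",
    "marking_traceability_zones",
]

def vague_items(drawing_pack):
    """Return checklist items that are missing or left empty in the pack."""
    return [key for key in NEVER_VAGUE if not drawing_pack.get(key)]

# Illustrative pack: finish left blank, marking zones never entered.
pack = {
    "decimal_thickness_mm": 1.2,
    "functional_tolerances": {"rear_io": "+/-0.15"},
    "datum_references": ["A", "B", "C"],
    "finish_callouts": "",
    "hardware_insertion_method": "press-in, after forming",
}

print(vague_items(pack))
```

For this example the check reports `finish_callouts` and `marking_traceability_zones`. It only proves a field was filled in, not that the answer is good, so it supplements the pilot-line conversation rather than replacing it.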

FAQ

What are laser-cut server chassis parts?

Laser-cut server chassis parts are precision sheet-metal components used to build the structural and functional frame of a server enclosure, including trays, brackets, covers, fan walls, drive cages, PSU mounts, and rear I/O panels, all produced to support fit, airflow, grounding, servicing, and repeatable assembly. In real-world factory terms, these are the parts that decide whether a server goes together cleanly or turns into a rework magnet. The laser matters, yes—but the real outcome depends on material thickness, bend control, coating stack, and datum discipline.

How should engineers set tolerances for server chassis parts?

Tolerance strategy for server chassis parts should separate cosmetic dimensions from function-critical mating features, define clear datums, specify decimal material thickness, and account for bending and coating effects instead of treating the flat blank as the finished product. I’d work backward from what has to mate cleanly—rear I/O zones, rail interfaces, board mounts, latch features, fan walls—not from generic drafting habits. If every feature gets the same tolerance note, the drawing looks neat but the product won’t behave neatly. NIST’s bilateral stock guidance is still worth reading for that reason.

What is the best material for laser-cut server chassis parts?

The best material for laser-cut server chassis parts is usually cold-rolled steel for mainstream server production because it balances stiffness, forming stability, cost, EMI behavior, and finish compatibility better than most alternatives in volume builds. But there’s no magic answer. Aluminum may make sense when weight or corrosion shifts the tradeoff. Stainless works in narrower cases, though I think it gets chosen too casually. Material choice should come from structural targets, grounding strategy, finish requirements, and service conditions—not habit.

Your next step

Here’s my recommendation.

Take your current drawing pack and force every dimension into one of three buckets: function-critical, assembly-supporting, or cosmetic. Do it with your mechanical engineer, your manufacturing person, and someone who has actually stood on the pilot line. If that meeting gets messy, good. It’s supposed to.

Because that mess is real.

Then tie every critical feature to an inspection method and a mating condition. Not theory. Not “should be okay.” Actual assembly logic. And if you’re building a content cluster around this subject, I’d connect this article naturally to server part identification and serialization, surface prep before finishing or bonding, and precision laser behavior on metal features.

Because that’s where the real split is in this industry.

Not who talks best. Who ships clean metal—at volume—without turning every pilot run into a rescue mission.